梯度裁剪

类似于球形投影,使梯度的 L2 范数不超过阈值。

训练

注意训练时候每个批量的隐状态是分别存储的,不会相互影响。

- 多久一次 backward?

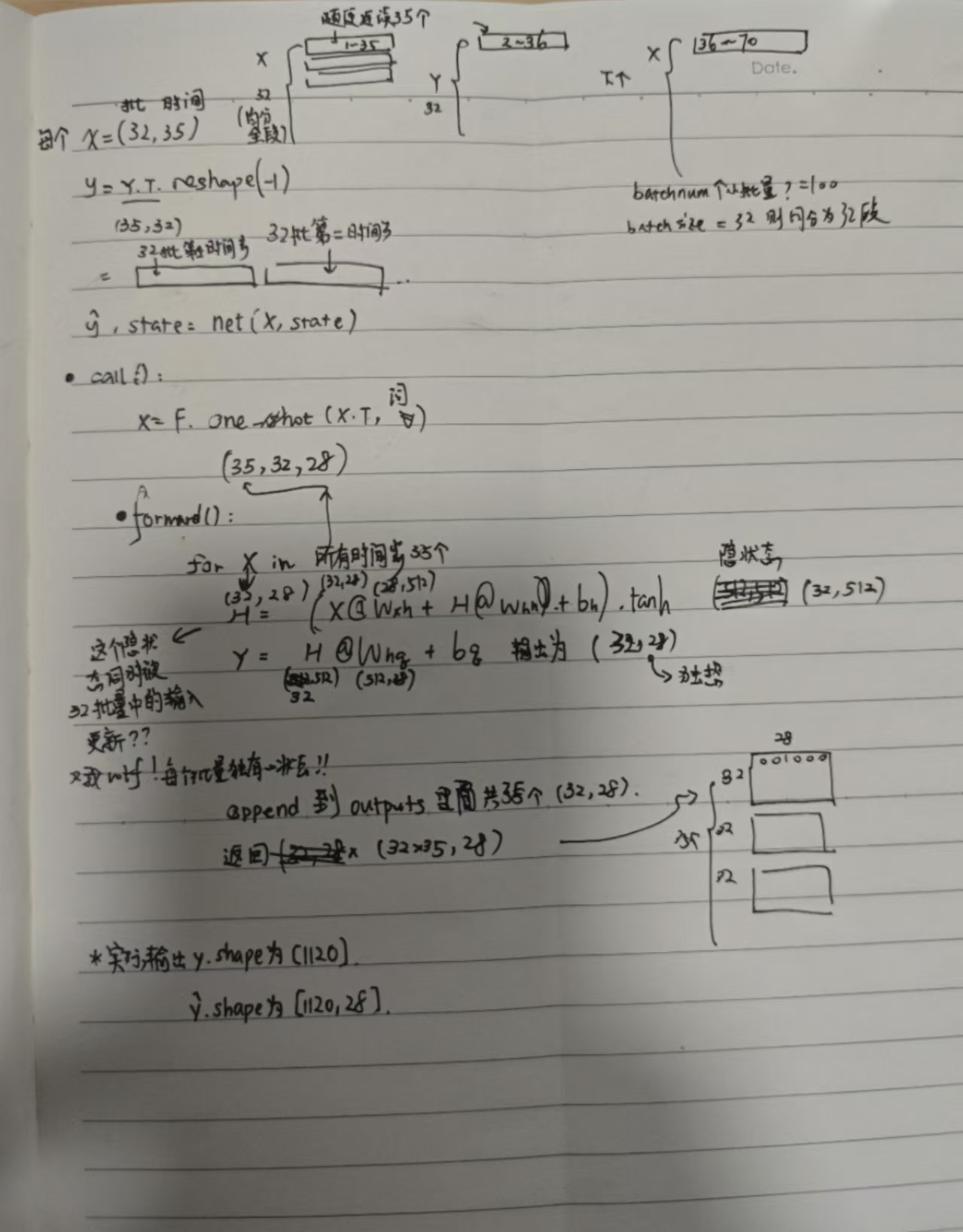

- 要将一个批量内所有样本的所有时间步(在程序开始时设定,比如样本 size = 32,单次输入时间步 num_steps 为 35)forward 以后(每次 forward 仅进行一个时间步的预测),才进行 backward。因此权重参数矩阵会累乘,容易导致梯度爆炸,故进行梯度裁剪。d2l 代码中,梯度裁剪在 backward 后,updater 之前执行。

- 注意对每个批量而言,每次输入仅有一个 token,并非多个时间步 token 同时输入。

手绘

2:15:13

手动代码

1import math2import torch3from torch import nn4from torch.nn import functional as F5from d2l import torch as d2l6

7batch_size, num_steps = 32, 358train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)9

10# 获取初始参数11def get_params(vocab_size, num_hiddens, device):12 num_inputs = num_outputs = vocab_size13 def normal(shape):14 return torch.randn(size=shape, device=device) * 0.0115 # 隐藏层参数145 collapsed lines

16 W_xh = normal((num_inputs, num_hiddens))17 W_hh = normal((num_hiddens, num_hiddens))18 b_h = torch.zeros(num_hiddens, device=device)19 # 输出层参数20 W_hq = normal((num_hiddens, num_outputs))21 b_q = torch.zeros(num_outputs, device=device)22

23 # 附加梯度24 params = [W_xh, W_hh, b_h, W_hq, b_q]25 for param in params:26 param.requires_grad_(True)27 return params28

29# 前向传播 forward30# 一次 forward 就将各个批量的所有时间步都生成完了31def rnn(inputs, state, params):32 # inputs的形状:(时间步数量,批量大小,词表大小)33 W_xh, W_hh, b_h, W_hq, b_q = params34 H, = state35 outputs = []36 # 循环每个时间步37 for X in inputs:38 # X的形状:(批量大小,词表大小)39 # 注意这里隐状态包含所有批量各自的隐状态40 # H 形状: (批量大小,隐藏单元个数)41 H = torch.tanh(torch.mm(X, W_xh) + torch.mm(H, W_hh) + b_h)42

43 # 本步输出不会影响下一步输出,只有隐状态才会44 # Y 形状: (批量大小,词表大小),因为前面已经指定 num_outputs = vocab_size45 Y = torch.mm(H, W_hq) + b_q46 outputs.append(Y)47 # 返回一个元组,即所有时间步的输出 y 和最后一个隐状态48 return torch.cat(outputs, dim=0), (H,)49 # torch.cat 输出为 (ns * n, n_vocab)50

51def init_rnn_state(batch_size, num_hiddens, device):52 return (torch.zeros((batch_size, num_hiddens), device=device), )53

54# 模型封装55class RNNModelScratch:56 def __init__(self, vocab_size, num_hiddens, device,57 get_params, init_state, forward_fn):58 self.vocab_size, self.num_hiddens = vocab_size, num_hiddens59 self.params = get_params(vocab_size, num_hiddens, device)60 self.init_state, self.forward_fn = init_state, forward_fn61

62 def __call__(self, X, state):63 X = F.one_hot(X.T, self.vocab_size).type(torch.float32)64 return self.forward_fn(X, state, self.params)65

66 def begin_state(self, batch_size, device):67 return self.init_state(batch_size, self.num_hiddens, device)68

69num_hiddens = 51270net = RNNModelScratch(len(vocab), num_hiddens, d2l.try_gpu(), get_params,71 init_rnn_state, rnn)72state = net.begin_state(X.shape[0], d2l.try_gpu())73

74# 预测75def predict_ch8(prefix, num_preds, net, vocab, device):76 """在prefix后面生成新字符"""77 state = net.begin_state(batch_size=1, device=device)78 outputs = [vocab[prefix[0]]]79 get_input = lambda: torch.tensor([outputs[-1]], device=device).reshape((1, 1))80 for y in prefix[1:]: # 预热期81 _, state = net(get_input(), state)82 outputs.append(vocab[y])83 for _ in range(num_preds): # 预测num_preds步84 y, state = net(get_input(), state)85 outputs.append(int(y.argmax(dim=1).reshape(1)))86 return ''.join([vocab.idx_to_token[i] for i in outputs])87

88# 梯度裁剪89def grad_clipping(net, theta): #@save90 """裁剪梯度"""91 if isinstance(net, nn.Module):92 params = [p for p in net.parameters() if p.requires_grad]93 else:94 params = net.params95 norm = torch.sqrt(sum(torch.sum((p.grad ** 2)) for p in params))96 if norm > theta:97 for param in params:98 param.grad[:] *= theta / norm99

100# 训练一个 epoch101def train_epoch_ch8(net, train_iter, loss, updater, device, use_random_iter):102 """训练网络一个迭代周期(定义见第8章)"""103 state, timer = None, d2l.Timer()104 metric = d2l.Accumulator(2) # 训练损失之和,词元数量105 for X, Y in train_iter:106 if state is None or use_random_iter:107 # 在第一次迭代或使用随机抽样时初始化state108 state = net.begin_state(batch_size=X.shape[0], device=device)109 else:110 if isinstance(net, nn.Module) and not isinstance(state, tuple):111 # state对于nn.GRU是个张量112 state.detach_()113 else:114 # state对于nn.LSTM或对于我们从零开始实现的模型是个张量115 for s in state:116 s.detach_()117 y = Y.T.reshape(-1)118 # y.shape: (ns * n)119 X, y = X.to(device), y.to(device)120 y_hat, state = net(X, state)121 l = loss(y_hat, y.long()).mean()122 if isinstance(updater, torch.optim.Optimizer):123 updater.zero_grad()124 l.backward()125 grad_clipping(net, 1)126 updater.step()127 else:128 l.backward()129 grad_clipping(net, 1)130 # 因为已经调用了mean函数131 updater(batch_size=1)132 metric.add(l * y.numel(), y.numel())133 return math.exp(metric[0] / metric[1]), metric[1] / timer.stop()134

135# 训练多个 epoch136def train_ch8(net, train_iter, vocab, lr, num_epochs, device,137 use_random_iter=False):138 """训练模型(定义见第8章)"""139 loss = nn.CrossEntropyLoss()140 animator = d2l.Animator(xlabel='epoch', ylabel='perplexity',141 legend=['train'], xlim=[10, num_epochs])142 # 初始化143 if isinstance(net, nn.Module):144 updater = torch.optim.SGD(net.parameters(), lr)145 else:146 updater = lambda batch_size: d2l.sgd(net.params, lr, batch_size)147 predict = lambda prefix: predict_ch8(prefix, 50, net, vocab, device)148 # 训练和预测149 for epoch in range(num_epochs):150 ppl, speed = train_epoch_ch8(151 net, train_iter, loss, updater, device, use_random_iter)152 if (epoch + 1) % 10 == 0:153 print(predict('time traveller'))154 animator.add(epoch + 1, [ppl])155 print(f'困惑度 {ppl:.1f}, {speed:.1f} 词元/秒 {str(device)}')156 print(predict('time traveller'))157 print(predict('traveller'))158

159num_epochs, lr = 500, 1160train_ch8(net, train_iter, vocab, lr, num_epochs, d2l.try_gpu())